Video Enhance AI v2.3 at a glance

- New Chronos AI model for frame rate conversion – Easily adjust motion in your clips by slowing them down by up to 2000%. Convert the frame rate of your source footage to conform to your project requirements (e.g. 23.976 fps → 30 fps), or add even smoother motion by converting to higher frame rates (e.g. 60 fps or 120 fps).

- New Proteus AI model for fine-tuned enhancement control – Get even more control over the output quality of your clips with six customizable sliders. New controls help reduce halos from over-sharpening, recover lost detail due to compression, and minimize aliasing that can result in less sharp footage.

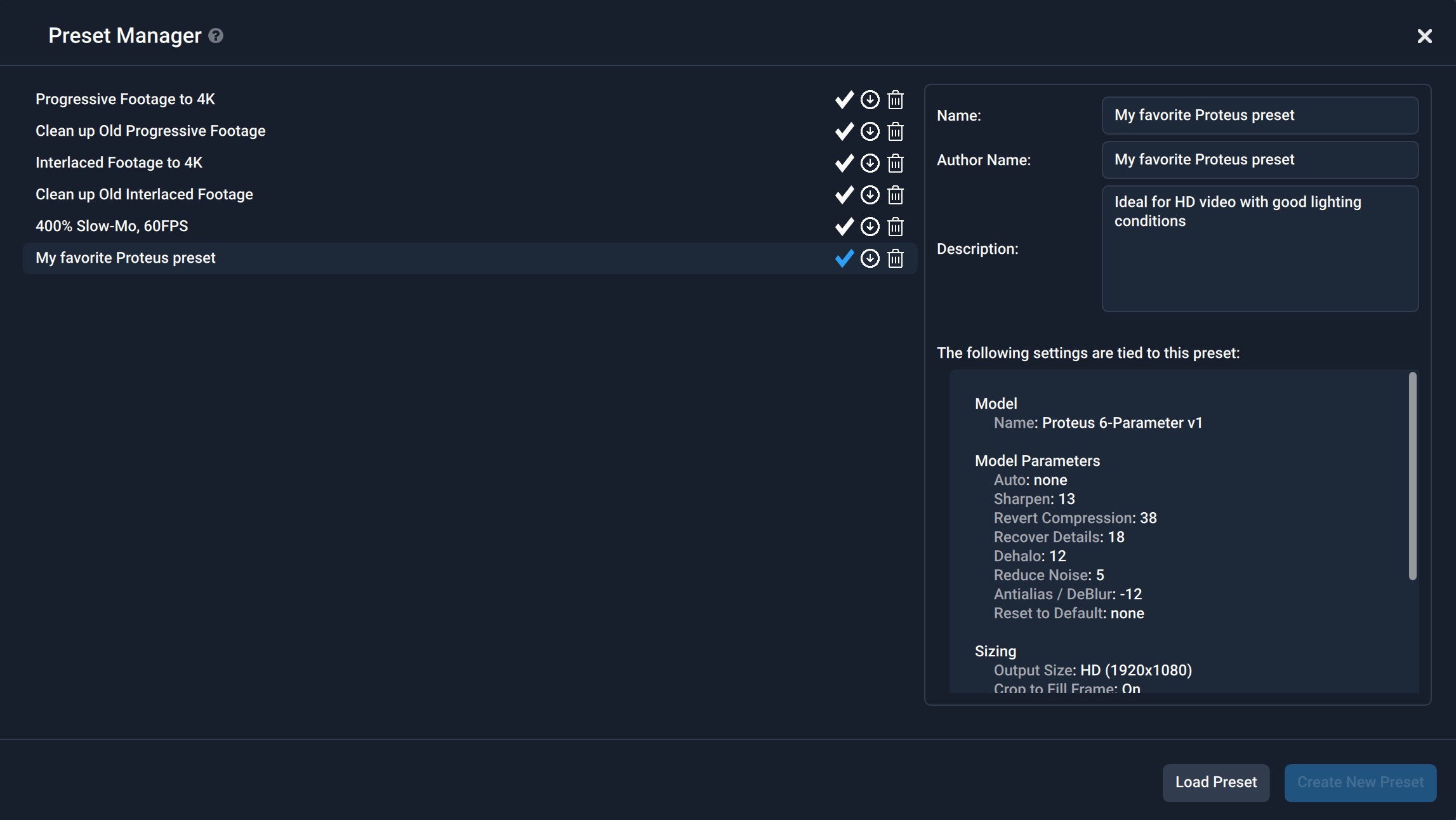

- New presets manager – Save and load specific settings for the AI models you use most. Download, share, and import presets with other Video Enhance AI users.

- AI model picker – Select the options that best represent your source clip and we’ll provide you with the AI models that can help enhance it.

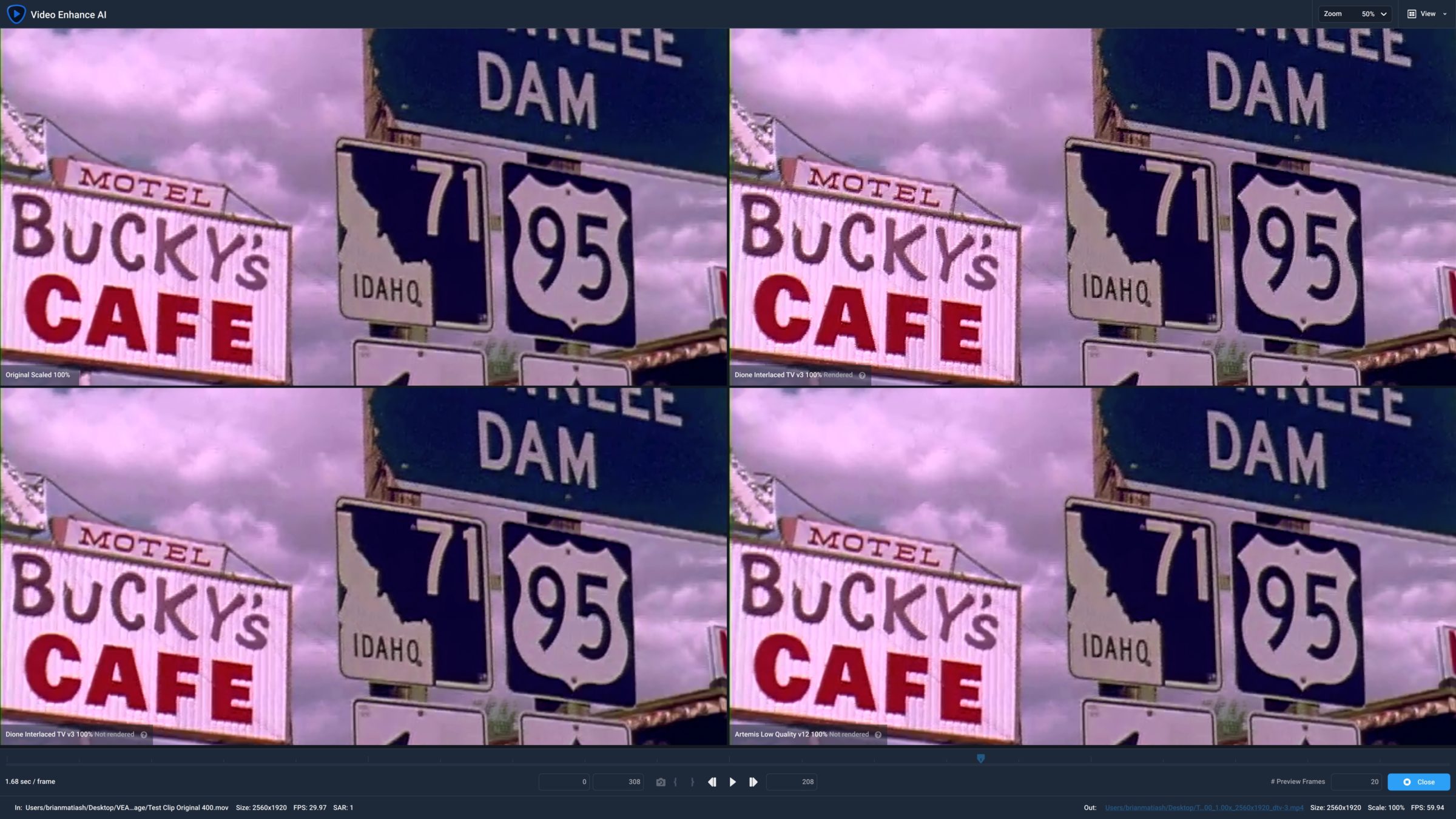

- Comparison View – Compare the results of three AI models alongside your original clip in one convenient view. Then select the best one to apply to your footage without changing views.

- Performance and usability improvements – We’ve made many improvements to our AI engine for better speed and stability across a range of hardware, and included several helpful usability improvements.

New Chronos AI model for frame rate conversion

Realistic slow motion made easy

With most other video editing applications, slowing down footage from a 24 fps clip would result in distracting stutters and dropped frames. However, Chronos leverages the power of AI to create beautiful slow motion results regardless of the source frame rate.
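To see why slowed-down footage stutters without interpolation, it helps to count frames. The sketch below is plain arithmetic, not a description of how Chronos works internally, and the function and parameter names are hypothetical. It assumes the slowed clip is played back at the same frame rate as the source.

```python
from fractions import Fraction

def slow_motion_plan(source_frames: int, fps: int, slowdown: int) -> dict:
    """Count the frames a slowed-down clip needs at the same playback rate.

    slowdown=4 means the clip plays four times longer (hypothetical parameter).
    """
    output_frames = source_frames * slowdown
    return {
        "source_seconds": Fraction(source_frames, fps),
        "output_seconds": Fraction(output_frames, fps),
        "output_frames": output_frames,
        # Frames with no counterpart in the source: they are either
        # repeated (visible stutter) or synthesized by interpolation.
        "missing_frames": output_frames - source_frames,
    }

plan = slow_motion_plan(source_frames=48, fps=24, slowdown=4)
print(plan["output_frames"], plan["missing_frames"])  # 192 144
```

In this example, a two-second 24 fps clip slowed down 4x needs 192 frames, of which 144 do not exist in the source. Repeating existing frames to fill that gap is what produces the stutter.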

Watch how to use Chronos to slow down your video and convert its frame rate, and learn best practices for getting great results:

Take the guesswork out of frame rate conversion

Working with clips that have varying frame rates is a reality in video editing, and conforming them to your project requirements used to be a daunting task. Now, Chronos takes care of the heavy lifting involved in realistically converting the frame rate of your source clips. Adjust a clip’s frame rate to 60, 100, or 120 fps to get even smoother motion.
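The timing side of a frame rate conversion can be sketched in a few lines. This is only the arithmetic of where each output frame falls between source frames, under the assumption of constant integer frame rates. It says nothing about how Chronos actually generates the in-between frames, and the function name is hypothetical.

```python
from fractions import Fraction

def source_positions(src_fps: int, dst_fps: int, n_out: int):
    """For each output frame, return (earlier source frame index, blend fraction).

    A fraction of 0 means the output frame coincides with a real source
    frame; any other value has to be synthesized between two neighbours.
    """
    positions = []
    for i in range(n_out):
        pos = Fraction(i * src_fps, dst_fps)  # position in source-frame units
        base = pos.numerator // pos.denominator
        positions.append((base, pos - base))
    return positions

for i, (base, frac) in enumerate(source_positions(24, 60, 6)):
    kind = "original" if frac == 0 else f"between {base} and {base + 1} at {frac}"
    print(i, kind)
```

For a 24 fps to 60 fps conversion, only every fifth output frame lines up with a source frame (output frames 0 and 5 here). The remaining frames sit at fractional positions such as 2/5 or 4/5 between two source frames, and the quality of those generated frames determines how smooth the result looks.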

Let’s take a look at how the new Chronos AI model handles frame rate conversion compared to another leading video editing application, Apple Final Cut Pro. The source video was filmed at 24 fps. On the left half of the video below, we let Final Cut Pro convert the original 24 fps clip to 60 fps. Notice the slight jitter, especially when the speed of the UAV increases. For the right half of the video, we used Chronos to perform the same 60 fps conversion. Notice how much smoother the motion is when using Chronos as opposed to Final Cut Pro.

New Proteus AI model for fine-tuned enhancement control

One of the most frequent requests we’ve received is for greater control over how your video clips are enhanced. The new Proteus AI model was built specifically to meet this request. Use six discrete sliders to fine-tune de-blocking, detail recovery, sharpening, noise reduction, de-haloing, and anti-aliasing. Or select a representative frame in your clip and click the “Auto” button to let our AI engine analyze that frame and recommend settings based on what’s displayed.

New presets manager

Quickly load your favorite AI model settings

If you’ve found yourself selecting the same AI model and settings over and over, you’re going to love the new presets manager. Configure the settings of your favorite AI model, save that snapshot as a preset, and quickly load it in subsequent projects. Share your favorite presets with other Video Enhance AI users and import presets shared with you.

New AI model picker and comparison view

Choose the right AI models with confidence

It can sometimes be challenging to decide which AI model would work best with your video clip. To make that task easier, we’ve built two helpful features: the AI model picker and the comparison view. With the AI model picker, choose the type of clip you want to enhance and the issue that most affects it. We’ll suggest three AI models that are best suited for the task based on your selections. On top of that, the new comparison view displays the results of those three recommended AI models side by side, giving you even more confidence when choosing the best one for your clip.

Performance and usability improvements

Thanks to improvements to our AI Engine pipeline, you’ll experience a 50% performance boost on NVIDIA GeForce GTX GPUs and up to 3x faster processing on Apple M1 computers. We’ve also included a number of usability improvements based directly on user feedback, including displaying the process completion time in “hours:minutes:seconds” instead of just seconds and adding the ability to switch between showing frames and timecodes in the UI.
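For readers curious about the difference between these display formats, here is a minimal sketch of the conversions involved. It is an illustration only, not the application’s code: the function names are hypothetical, and the timecode helper assumes an integer frame rate with non-drop-frame counting.

```python
def format_hms(total_seconds: int) -> str:
    """Format a duration in whole seconds as hours:minutes:seconds."""
    hours, rem = divmod(total_seconds, 3600)
    minutes, seconds = divmod(rem, 60)
    return f"{hours:02d}:{minutes:02d}:{seconds:02d}"

def frame_to_timecode(frame: int, fps: int) -> str:
    """Convert a frame number to HH:MM:SS:FF (non-drop-frame, integer fps)."""
    seconds, frames = divmod(frame, fps)
    return f"{format_hms(seconds)}:{frames:02d}"

print(format_hms(5025))             # 01:23:45
print(frame_to_timecode(1500, 24))  # 00:01:02:12
```

A remaining time of 5025 seconds is much easier to read as 01:23:45, and frame 1500 of a 24 fps clip corresponds to timecode 00:01:02:12.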

We’re constantly striving to make Video Enhance AI smarter and more effective when improving the quality of your video footage, whether it’s bringing out details from your old family videos, upscaling a Zoom video recording, or adjusting the resolution, speed and frame rate of cinematic footage. Be sure to tag us on social media and use #TopazVEAI so that we can see your amazing work! If you don’t yet own Video Enhance AI, purchase a copy or download a free trial.